Emerging Research Directions: Difference between revisions


== 7.4 Energy-Efficient Edge Architectures ==

The exponential growth of Internet of Things (IoT) devices, combined with the rise of artificial intelligence (AI) and advanced communication networks such as 5G and emerging 6G, is driving the expansion of edge computing. In this model, data processing shifts from centralized cloud data centers to nodes closer to the data source or end-users. This architectural shift reduces network latency, optimizes bandwidth usage, and enables real-time analytics. However, distributing processing across many geographically dispersed devices also has significant implications for energy consumption. While the energy efficiency of large data centers has been studied extensively, smaller edge nodes, such as micro data centers, IoT gateways, and embedded systems, have received less attention, even though collectively they contribute notably to carbon emissions.


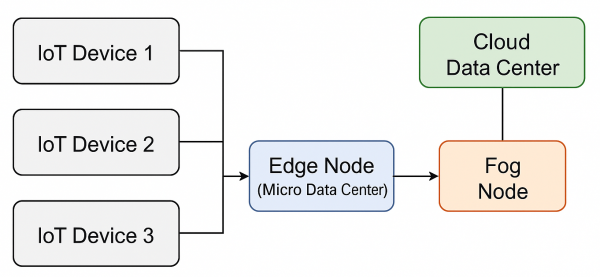

=== High-Level Edge-Fog-Cloud Architecture ===

Modern IoT and AI systems often rely on an edge-fog-cloud architecture. Data collected by IoT sensors typically undergoes initial processing at edge nodes or micro data centers. This local processing minimizes the data volume that must be sent to the cloud, thereby reducing network congestion and latency. Intermediate fog nodes can then aggregate data from multiple edge devices for further analysis or buffering, while centralized cloud data centers handle large-scale storage and intensive computational tasks.

[[File:arch.png|600px|thumb|center| Simplified edge–fog–cloud architecture. IoT devices collect data]]


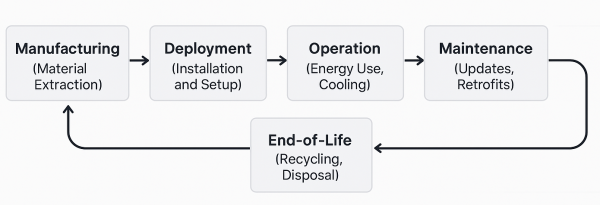

=== Lifecycle of an Edge Device ===

Evaluating the carbon footprint of edge devices requires considering their entire lifecycle. The manufacturing phase often involves substantial energy consumption and raw-material use. During deployment, the energy efficiency of operation, including effective cooling, is critical. Ongoing maintenance and updates can extend a device's lifespan, while end-of-life disposal or recycling presents further environmental challenges. Each stage of the lifecycle offers opportunities for reducing carbon emissions through measures such as modular upgrades, the use of recycled materials, and environmentally responsible disposal practices.

[[File:flow.png|600px|thumb|center| Lifecycle stages of an edge device. Each phase, from material extraction and manufacturing to final disposal, impacts the overall carbon footprint. Interventions such as using recycled materials, adopting modular components, and extending product lifespans can substantially reduce environmental impact.]]

=== Hardware-Level Approaches ===

Efforts to reduce the carbon footprint at the edge have explored various hardware-focused strategies. These include systems-on-chip (SoCs) with ultra-low-power states and selective core activation, and heterogeneous multicore processors that activate only the cores needed to meet current performance demands. Custom AI accelerators have also shown promise in significantly lowering power consumption during neural network inference. In addition, sustainable manufacturing practices and recycled materials reduce embodied emissions, while biologically inspired packaging and compact liquid-cooling methods lower the energy spent on cooling.


=== Software-Level Optimizations ===

Energy-aware software design plays a critical role in enhancing sustainability at the edge. Dynamic voltage and frequency scaling (DVFS) lowers processor voltage and clock frequency when the workload does not require full performance, which reduces power draw. Recent DVFS work includes adaptive approaches that use machine learning to tune voltage and frequency settings at run time. Task scheduling algorithms that distribute workloads among heterogeneous IoT gateways balance performance, latency, and energy consumption. Partial offloading techniques have also emerged, in which computationally intensive parts of a task are offloaded to specialized infrastructure while simpler processes are handled locally. Lightweight containerization further reduces resource overhead during deployment.



=== System-Level Coordination and Policy Frameworks ===

Reducing the carbon footprint in edge computing requires coordinated efforts across hardware, network, and management layers. Integrated edge-fog-cloud architectures facilitate workload migration across nodes based on resource availability and carbon intensity variations. Adaptive networking protocols, such as those that enable sleep modes during off-peak hours or consolidate workloads among neighboring gateways, further enhance energy efficiency. AI-based methods now play an increasingly important role by predicting resource utilization and carbon intensity to enable proactive power management.

Policy and regulatory frameworks also contribute significantly by promoting standardized carbon footprint metrics and setting minimum energy efficiency levels for edge devices. Incentives like carbon credits and tax benefits support the transition to greener technologies, while eco-design principles—such as modular battery packs and real-time energy monitoring—help extend hardware lifespans and reduce electronic waste.

=== Key Strategies for Reducing Carbon Emissions ===

Effective strategies for mitigating carbon emissions in edge computing span multiple system layers. On the hardware front, deploying ultra-low-power SoCs, optimizing chip layouts, and adopting innovative packaging materials can substantially reduce power consumption. Complementary software approaches include power-aware scheduling, partial offloading of computational tasks, and containerized orchestration with minimal resource overhead. AI-driven coordination helps predict workload spikes, carbon intensity variations, and thermal thresholds, allowing systems to scale resources proactively.

Integrated measures for carbon footprint reduction also involve incorporating localized renewable energy sources—such as solar or wind power—at edge sites. Although practical deployment may face challenges in certain regions, these initiatives, together with supportive government policies and industry standards, are pivotal in driving sustainable practices across the sector.


=== Open Challenges ===

Despite significant progress, challenges remain. The heterogeneity of edge devices complicates the implementation of uniform energy-saving strategies. Energy-monitoring and carbon-intensity data are available inconsistently across regions, which hinders real-time optimization. Critical applications such as autonomous vehicles and healthcare systems cannot tolerate disruption or delay, so energy-saving measures in these settings must be weighed carefully against reliability. Diverse policy frameworks across regions further complicate regulatory compliance for global operators. Finally, security and privacy concerns related to AI-driven power management and data collection must be addressed to prevent potential vulnerabilities.

=== Future Directions ===

Emerging approaches such as federated learning for energy management offer promising avenues for distributed collaboration without compromising data privacy. Cross-layer co-design—integrating hardware, operating system functions, and application-level optimizations—could yield greater efficiency improvements than isolated strategies. The development of dynamic carbon-aware energy markets, where edge nodes schedule tasks based on real-time energy prices and carbon intensity, presents an innovative framework for sustainable resource allocation. Standardized metrics and benchmarking tools, akin to data center Power Usage Effectiveness (PUE), are also needed to enable consistent comparisons and drive further advancements in edge computing sustainability.

== 7.5 Data Persistence == | == 7.5 Data Persistence == | ||

Revision as of 15:48, 3 April 2025

Emerging Research Directions

7.1 Task and Resource Scheduling

https://ieeexplore.ieee.org/document/9519636 Q. Luo, S. Hu, C. Li, G. Li and W. Shi, "Resource Scheduling in Edge Computing: A Survey," in IEEE Communications Surveys & Tutorials, vol. 23, no. 4, pp. 2131-2165, Fourthquarter 2021, doi: 10.1109/COMST.2021.3106401. keywords: {Edge computing;Processor scheduling;Task analysis;Resource management;Cloud computing;Job shop scheduling;Internet of Things;Internet of things;edge computing;resource allocation;computation offloading;resource provisioning},

https://www.sciencedirect.com/science/article/abs/pii/S014036641930831X Congfeng Jiang, Tiantian Fan, Honghao Gao, Weisong Shi, Liangkai Liu, Christophe Cérin, Jian Wan, Energy aware edge computing: A survey, Computer Communications, Volume 151, 2020, Pages 556-580, ISSN 0140-3664, https://doi.org/10.1016/j.comcom.2020.01.004. (https://www.sciencedirect.com/science/article/pii/S014036641930831X) Abstract: Edge computing is an emerging paradigm for the increasing computing and networking demands from end devices to smart things. Edge computing allows the computation to be offloaded from the cloud data centers to the network edge and edge nodes for lower latency, security and privacy preservation. Although energy efficiency in cloud data centers has been broadly investigated, energy efficiency in edge computing is largely left uninvestigated due to the complicated interactions between edge devices, edge servers, and cloud data centers. In order to achieve energy efficiency in edge computing, a systematic review on energy efficiency of edge devices, edge servers, and cloud data centers is required. In this paper, we survey the state-of-the-art research work on energy-aware edge computing, and identify related research challenges and directions, including architecture, operating system, middleware, applications services, and computation offloading. Keywords: Edge computing; Energy efficiency; Computing offloading; Benchmarking; Computation partitioning

https://onlinelibrary.wiley.com/doi/10.1002/spe.3340

https://www.sciencedirect.com/science/article/abs/pii/S0167739X18319903 Wazir Zada Khan, Ejaz Ahmed, Saqib Hakak, Ibrar Yaqoob, Arif Ahmed, Edge computing: A survey, Future Generation Computer Systems, Volume 97, 2019, Pages 219-235, ISSN 0167-739X, https://doi.org/10.1016/j.future.2019.02.050. (https://www.sciencedirect.com/science/article/pii/S0167739X18319903) Abstract: In recent years, the Edge computing paradigm has gained considerable popularity in academic and industrial circles. It serves as a key enabler for many future technologies like 5G, Internet of Things (IoT), augmented reality and vehicle-to-vehicle communications by connecting cloud computing facilities and services to the end users. The Edge computing paradigm provides low latency, mobility, and location awareness support to delay-sensitive applications. Significant research has been carried out in the area of Edge computing, which is reviewed in terms of latest developments such as Mobile Edge Computing, Cloudlet, and Fog computing, resulting in providing researchers with more insight into the existing solutions and future applications. This article is meant to serve as a comprehensive survey of recent advancements in Edge computing highlighting the core applications. It also discusses the importance of Edge computing in real life scenarios where response time constitutes the fundamental requirement for many applications. The article concludes with identifying the requirements and discuss open research challenges in Edge computing. Keywords: Mobile edge computing; Edge computing; Cloudlets; Fog computing; Micro clouds; Cloud computing

https://www.sciencedirect.com/science/article/abs/pii/S1383762121001570 Akhirul Islam, Arindam Debnath, Manojit Ghose, Suchetana Chakraborty, A Survey on Task Offloading in Multi-access Edge Computing, Journal of Systems Architecture, Volume 118, 2021, 102225, ISSN 1383-7621, https://doi.org/10.1016/j.sysarc.2021.102225. (https://www.sciencedirect.com/science/article/pii/S1383762121001570) Abstract: With the advent of new technologies in both hardware and software, we are in the need of a new type of application that requires huge computation power and minimal delay. Applications such as face recognition, augmented reality, virtual reality, automated vehicles, industrial IoT, etc. belong to this category. Cloud computing technology is one of the candidates to satisfy the computation requirement of resource-intensive applications running in UEs (User Equipment) as it has ample computational capacity, but the latency requirement for these applications cannot be satisfied by the cloud due to the propagation delay between UEs and the cloud. To solve the latency issues for the delay-sensitive applications a new network paradigm has emerged recently known as Multi-Access Edge Computing (MEC) (also known as mobile edge computing) in which computation can be done at the network edge of UE devices. To execute the resource-intensive tasks of UEs in the MEC servers hosted in the network edge, a UE device has to offload some of the tasks to MEC servers. Few survey papers talk about task offloading in MEC, but most of them do not have in-depth analysis and classification exclusive to MEC task offloading. In this paper, we are providing a comprehensive survey on the task offloading scheme for MEC proposed by many researchers. We will also discuss issues, challenges, and future research direction in the area of task offloading to MEC servers. Keywords: Multi-access edge computing; Task offloading; Mobile edge computing; Survey

7.2 Edge for AR/VR

7.3 Vehicle Computing

7.4 Energy-Efficient Edge Architectures

The exponential growth of Internet of Things (IoT) devices, combined with the rise of artificial intelligence (AI) and advanced communication networks such as 5G and emerging 6G, is driving the expansion of edge computing. In this model, data processing shifts from centralized cloud data centers to nodes closer to the data source or end-users. This architectural shift reduces network latency, optimizes bandwidth usage, and enables real-time analytics. However, distributing processing across many geographically dispersed devices also has significant implications for energy consumption. While the energy efficiency of large data centers has been studied extensively, smaller edge nodes, such as micro data centers, IoT gateways, and embedded systems, have received less attention, even though collectively they contribute notably to carbon emissions.

High-Level Edge-Fog-Cloud Architecture

Modern IoT and AI systems often rely on an edge-fog-cloud architecture. Data collected by IoT sensors typically undergoes initial processing at edge nodes or micro data centers. This local processing minimizes the data volume that must be sent to the cloud, thereby reducing network congestion and latency. Intermediate fog nodes can then aggregate data from multiple edge devices for further analysis or buffering, while centralized cloud data centers handle large-scale storage and intensive computational tasks.
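To make the data-reduction argument concrete, the following minimal Python sketch shows an edge node averaging non-overlapping windows of raw sensor readings and forwarding only the aggregates upstream. The function names, the window size, and the readings are hypothetical illustrations, not part of any cited system. A real deployment would choose aggregation rules that preserve whatever the fog- and cloud-side analytics actually need.

```python
from statistics import mean


def aggregate_windows(samples: list[float], window: int) -> list[float]:
    """Average non-overlapping windows of raw readings (hypothetical edge-side step).

    A trailing partial window is averaged on its own, so no reading is silently dropped.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    return [mean(samples[i:i + window]) for i in range(0, len(samples), window)]


def uplink_reduction(n_raw: int, n_sent: int) -> float:
    """Fraction of uplink messages avoided by forwarding aggregates instead of raw samples."""
    return 1.0 - n_sent / n_raw


# Hypothetical temperature readings collected by one sensor.
raw = [20.1, 20.3, 20.2, 20.4, 21.0, 21.2, 20.9, 21.1]
summary = aggregate_windows(raw, window=4)
print(len(summary), uplink_reduction(len(raw), len(summary)))  # prints: 2 0.75
```

Averaging is only one possible reduction. Filtering, compression, or on-device inference that sends only events would fit the same edge-first pattern.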

Lifecycle of an Edge Device

Evaluating the carbon footprint of edge devices requires considering their entire lifecycle. The manufacturing phase often involves substantial energy consumption and raw-material use. During deployment, the energy efficiency of operation, including effective cooling, is critical. Ongoing maintenance and updates can extend a device's lifespan, while end-of-life disposal or recycling presents further environmental challenges. Each stage of the lifecycle offers opportunities for reducing carbon emissions through measures such as modular upgrades, the use of recycled materials, and environmentally responsible disposal practices.
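One simple way to reason about lifecycle trade-offs is to amortize a device's embodied emissions (manufacturing, transport, and disposal) over its service life, then add its yearly operational emissions. The sketch below is a simplified model under stated assumptions. It assumes constant average power and a constant grid carbon intensity, and it ignores maintenance energy. The class name and every number are hypothetical.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class EdgeDeviceFootprint:
    embodied_kgco2e: float      # manufacturing, transport and end-of-life emissions
    avg_power_w: float          # average power draw while deployed
    grid_kgco2e_per_kwh: float  # carbon intensity of the supplying grid (assumed constant)

    def operational_kgco2e_per_year(self) -> float:
        kwh_per_year = self.avg_power_w * HOURS_PER_YEAR / 1000
        return kwh_per_year * self.grid_kgco2e_per_kwh

    def annualized_kgco2e(self, lifetime_years: float) -> float:
        """Embodied emissions amortized over the service life plus one year of operation."""
        if lifetime_years <= 0:
            raise ValueError("lifetime_years must be positive")
        return self.embodied_kgco2e / lifetime_years + self.operational_kgco2e_per_year()


# Hypothetical gateway: 100 kgCO2e embodied, 10 W average draw, 0.4 kgCO2e/kWh grid.
gateway = EdgeDeviceFootprint(embodied_kgco2e=100, avg_power_w=10, grid_kgco2e_per_kwh=0.4)
for years in (3, 6):
    print(years, round(gateway.annualized_kgco2e(years), 1))
```

With these assumed figures, doubling the service life from three to six years lowers the annualized footprint from about 68.4 to 51.7 kgCO2e. This is the quantitative intuition behind modular upgrades and lifespan extension.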

Hardware-Level Approaches

Efforts to reduce the carbon footprint at the edge have explored various hardware-focused strategies. These include systems-on-chip (SoCs) with ultra-low-power states and selective core activation, and heterogeneous multicore processors that activate only the cores needed to meet current performance demands. Custom AI accelerators have also shown promise in significantly lowering power consumption during neural network inference. In addition, sustainable manufacturing practices and recycled materials reduce embodied emissions, while biologically inspired packaging and compact liquid-cooling methods lower the energy spent on cooling.
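Selective core activation can be framed as a selection problem: choose the lowest-power set of cores whose combined throughput covers the current demand, and power-gate the rest. The sketch below brute-forces this choice for a small, hypothetical big.LITTLE-style core catalogue. The capacities and power figures are illustrative. Demand is treated as a sustained throughput requirement, so the sketch minimizes power rather than energy per task.

```python
from itertools import combinations

# Hypothetical core catalogue: (name, throughput in work units/s, active power in W).
CORES = [
    ("little0", 1.0, 0.2),
    ("little1", 1.0, 0.2),
    ("big0", 3.0, 1.5),
    ("big1", 3.0, 1.5),
]


def cheapest_core_set(demand, cores=CORES):
    """Return (core names, total power) of the lowest-power subset meeting `demand`.

    Cores left out are assumed to be power-gated and draw 0 W. Enumerating all 2**n
    subsets is acceptable for the handful of cores on a typical edge SoC.
    """
    best = None
    for k in range(1, len(cores) + 1):
        for subset in combinations(cores, k):
            throughput = sum(c[1] for c in subset)
            power = sum(c[2] for c in subset)
            if throughput >= demand and (best is None or power < best[1]):
                best = (tuple(c[0] for c in subset), power)
    if best is None:
        raise ValueError("demand exceeds the combined throughput of all cores")
    return best


for demand in (0.5, 1.5, 4.0):
    print(demand, cheapest_core_set(demand))
```

Under these assumptions, a demand of 1.5 units is served by the two little cores alone. A demand of 4 needs one big core and one little core.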

| Study | Key Focus | Contributions | Findings |
|---|---|---|---|
| Template:Cite | Ultra-low-power SoC design | Introduced SoC with power gating and selective core activation | Demonstrated a significant reduction in idle power consumption |
| Template:Cite | Heterogeneous multicore processors | Proposed activating only necessary cores for real-time tasks | Showed improved balance of performance and energy usage |
| Template:Cite | Custom AI accelerators | Developed specialized hardware for on-device inference | Reported substantial energy savings in neural network operations |
| Template:Cite | Sustainable manufacturing | Employed life-cycle assessments and recycled materials | Achieved a measurable decrease in overall manufacturing emissions |
| Template:Cite | Biologically inspired packaging | Integrated biomimetic materials for enhanced heat dissipation | Reduced cooling energy overhead and improved thermal performance |

Software-Level Optimizations

Energy-aware software design plays a critical role in enhancing sustainability at the edge. Dynamic voltage and frequency scaling (DVFS) lowers processor voltage and clock frequency when the workload does not require full performance, which reduces power draw. Recent DVFS work includes adaptive approaches that use machine learning to tune voltage and frequency settings at run time. Task scheduling algorithms that distribute workloads among heterogeneous IoT gateways balance performance, latency, and energy consumption. Partial offloading techniques have also emerged, in which computationally intensive parts of a task are offloaded to specialized infrastructure while simpler processes are handled locally. Lightweight containerization further reduces resource overhead during deployment.
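The energy argument behind DVFS follows from the usual CMOS dynamic-power approximation P ≈ C·V²·f. Running a fixed number of cycles takes cycles/f seconds, so dynamic energy scales with V². Static (leakage) power, by contrast, accumulates for longer at low frequency. The sketch below picks the lowest-energy operating point that still meets a deadline, using a hypothetical P-state table. It is a static rule under assumed parameters (effective capacitance, static power). It is not one of the learning-based DVFS controllers mentioned above, which would instead learn such choices from observed workloads.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    freq_hz: float
    voltage_v: float


# Hypothetical P-state table for an edge SoC (not taken from a real datasheet).
P_STATES = [
    OperatingPoint(0.6e9, 0.70),
    OperatingPoint(1.0e9, 0.80),
    OperatingPoint(1.5e9, 0.95),
    OperatingPoint(2.0e9, 1.10),
]


def task_energy_j(op, cycles, c_eff_f=1e-9, p_static_w=0.1):
    """Energy (J) to execute `cycles` at `op`: (C*V^2*f + static power) * runtime.

    c_eff_f (effective switched capacitance) and p_static_w are assumed values.
    """
    runtime_s = cycles / op.freq_hz
    dynamic_w = c_eff_f * op.voltage_v ** 2 * op.freq_hz
    return (dynamic_w + p_static_w) * runtime_s


def pick_operating_point(cycles, deadline_s, states=P_STATES):
    """Return the lowest-energy P-state that still finishes `cycles` within the deadline."""
    feasible = [op for op in states if cycles / op.freq_hz <= deadline_s]
    if not feasible:
        raise ValueError("no P-state meets the deadline")
    return min(feasible, key=lambda op: task_energy_j(op, cycles))


for deadline in (0.7, 1.0, 2.0):
    chosen = pick_operating_point(1e9, deadline)
    print(f"deadline {deadline} s -> {chosen.freq_hz / 1e9} GHz")
```

With these assumed numbers, a one-billion-cycle task with a 1 s deadline runs at 1.0 GHz. Relaxing the deadline to 2 s lets it drop to 0.6 GHz, while tightening it to 0.7 s forces 1.5 GHz.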

| Study | Contribution | Findings | Implications |
|---|---|---|---|
| Template:Cite | Proposed an edge-fog-cloud migration framework | Demonstrated dynamic workload relocation based on resource availability | Highlighted potential for reduced overall carbon footprint |
| Template:Cite | Developed sleep-mode protocols for 5G base stations | Showed drastic energy reduction during off-peak usage | Enabled significant cost savings and lowered emissions |
| Template:Cite | Introduced carbon-intensity-aware scheduling | Aligned workload placement with regional grid data | Improved sustainability in multi-tier edge-fog-cloud environments |
| Template:Cite | Proposed standardized carbon footprint metrics | Offered a uniform reporting structure for edge infrastructures | Facilitated consistent policy and regulatory compliance |
| Template:Cite | Analyzed economic incentives for green edge computing | Demonstrated effectiveness of tax benefits and carbon credits | Encouraged broader adoption of low-power SoCs and practices |
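The partial offloading idea from Software-Level Optimizations can be framed as a small optimization. For each component of a task, the device either runs it locally or uploads its input to an edge server, and the goal is to minimize device energy subject to a deadline. The sketch below enumerates all splits for a two-stage task. The device and link parameters and the component names are hypothetical. The latency model is also simplified: components run sequentially, and each offloaded component uploads its own input even if the previous stage already ran remotely.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical device, link and server parameters (illustrative, not measured).
LOCAL_HZ = 1.0e9           # device CPU speed (cycles/s)
LOCAL_J_PER_CYCLE = 1e-9   # device energy per CPU cycle
REMOTE_HZ = 8.0e9          # edge-server CPU speed (cycles/s)
UPLINK_BPS = 20e6          # uplink bandwidth (bits/s)
TX_POWER_W = 0.5           # device radio power while transmitting


@dataclass(frozen=True)
class Component:
    name: str
    cycles: float       # CPU cycles the component needs
    input_bits: float   # data to upload if the component is offloaded


def cost(component, offload):
    """(device energy in J, latency in s) of running one component locally or remotely."""
    if offload:
        tx_s = component.input_bits / UPLINK_BPS
        return TX_POWER_W * tx_s, tx_s + component.cycles / REMOTE_HZ
    return component.cycles * LOCAL_J_PER_CYCLE, component.cycles / LOCAL_HZ


def best_partition(components, deadline_s):
    """Enumerate every local/offload split and return (energy, latency, offloaded names)
    for the lowest-energy split that meets the deadline, or None if none does.
    Components are assumed to run one after another, so their latencies add up."""
    best = None
    for choice in product([False, True], repeat=len(components)):
        costs = [cost(c, off) for c, off in zip(components, choice)]
        energy = sum(e for e, _ in costs)
        latency = sum(t for _, t in costs)
        if latency <= deadline_s and (best is None or energy < best[0]):
            best = (energy, latency, [c.name for c, off in zip(components, choice) if off])
    return best


# Hypothetical two-stage task: light preprocessing of a 1 MB frame, then heavy
# inference on a much smaller feature vector.
TASK = [
    Component("preprocess", cycles=1e8, input_bits=8e6),
    Component("inference", cycles=2e9, input_bits=1e6),
]
print(best_partition(TASK, deadline_s=1.0))
```

Under these assumptions, the best split keeps the light preprocessing stage local, which shrinks the data to upload. Only the heavy inference stage is offloaded.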

System-Level Coordination and Policy Frameworks

Reducing the carbon footprint in edge computing requires coordinated efforts across hardware, network, and management layers. Integrated edge-fog-cloud architectures facilitate workload migration across nodes based on resource availability and carbon intensity variations. Adaptive networking protocols, such as those that enable sleep modes during off-peak hours or consolidate workloads among neighboring gateways, further enhance energy efficiency. AI-based methods now play an increasingly important role by predicting resource utilization and carbon intensity to enable proactive power management.
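Carbon-intensity-aware workload placement can be approximated with a greedy heuristic. Handle the largest jobs first, and put each one on the node with spare capacity where it would emit the least CO2e. That emission figure depends on the node's energy per unit of work and its grid's current carbon intensity. The node names, capacities, and intensities below are hypothetical. The sketch also ignores latency and migration costs, which a real edge-fog-cloud orchestrator would have to weigh.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_capacity: float       # work units the node can still accept
    kwh_per_unit: float        # energy drawn per unit of work on this node
    grid_g_per_kwh: float      # current carbon intensity of the node's grid (gCO2e/kWh)
    assigned: list = field(default_factory=list)

    def emissions_g(self, units):
        return units * self.kwh_per_unit * self.grid_g_per_kwh


def place_jobs(jobs, nodes):
    """Greedy heuristic: largest jobs first, each onto the feasible node that would emit
    the least CO2e for it. Mutates the nodes and returns total gCO2e of the placement."""
    total = 0.0
    for name, units in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        candidates = [n for n in nodes if n.free_capacity >= units]
        if not candidates:
            raise RuntimeError(f"no node has capacity for {name}")
        best = min(candidates, key=lambda n: n.emissions_g(units))
        best.free_capacity -= units
        best.assigned.append(name)
        total += best.emissions_g(units)
    return total


# Hypothetical tiers and grid conditions at one point in time.
nodes = [
    Node("edge-a", free_capacity=10, kwh_per_unit=0.02, grid_g_per_kwh=450),
    Node("fog-b", free_capacity=20, kwh_per_unit=0.015, grid_g_per_kwh=120),
    Node("cloud-c", free_capacity=100, kwh_per_unit=0.01, grid_g_per_kwh=300),
]
total = place_jobs({"video": 15, "telemetry": 8, "batch": 12}, nodes)
for n in nodes:
    print(n.name, n.assigned)
print(round(total, 1), "gCO2e")
```

Here the low-carbon fog node absorbs the largest job. The remaining jobs go to the cloud node rather than the edge node, whose grid is assumed to be the most carbon-intensive.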

Policy and regulatory frameworks also contribute significantly by promoting standardized carbon footprint metrics and setting minimum energy efficiency levels for edge devices. Incentives like carbon credits and tax benefits support the transition to greener technologies, while eco-design principles—such as modular battery packs and real-time energy monitoring—help extend hardware lifespans and reduce electronic waste.
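The off-peak workload consolidation among neighboring gateways, described at the start of this subsection, is essentially a bin-packing problem. The goal is to pack the loads onto as few gateways as possible so that the rest can enter a sleep mode. The sketch below uses the standard first-fit-decreasing heuristic. The gateway names, loads, and the 80 % capacity ceiling (which leaves headroom for bursts) are hypothetical. The sketch also assumes workloads can be migrated freely between gateways.

```python
def consolidate(loads, capacity):
    """First-fit decreasing: pack gateway workloads onto as few gateways as possible.

    Returns one list of workload names per gateway that stays awake; any remaining
    gateways could be put into a sleep state.
    """
    bins = []  # each entry: [remaining capacity, [workload names]]
    for name, load in sorted(loads.items(), key=lambda kv: kv[1], reverse=True):
        if load > capacity:
            raise ValueError(f"{name} exceeds a single gateway's capacity")
        for b in bins:
            if b[0] >= load:       # first gateway with enough room wins
                b[0] -= load
                b[1].append(name)
                break
        else:                      # no existing gateway fits: keep another one awake
            bins.append([capacity - load, [name]])
    return [names for _, names in bins]


# Hypothetical off-peak loads (% of one gateway's capacity), one workload per gateway.
loads = {"gw1": 30, "gw2": 10, "gw3": 45, "gw4": 20, "gw5": 15}
active = consolidate(loads, capacity=80)
print(active, "->", len(loads) - len(active), "gateways can sleep")
```

For the example loads, two gateways can carry all five workloads, so the other three could sleep until demand rises.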

Key Strategies for Reducing Carbon Emissions

Effective strategies for mitigating carbon emissions in edge computing span multiple system layers. On the hardware front, deploying ultra-low-power SoCs, optimizing chip layouts, and adopting innovative packaging materials can substantially reduce power consumption. Complementary software approaches include power-aware scheduling, partial offloading of computational tasks, and containerized orchestration with minimal resource overhead. AI-driven coordination helps predict workload spikes, carbon intensity variations, and thermal thresholds, allowing systems to scale resources proactively.
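Proactive scaling needs a forecast of the next interval's load. The AI-driven predictors discussed above are typically learned models. As a deliberately simple stand-in, the sketch below uses an exponentially weighted moving average and provisions enough container replicas to cover the forecast plus a safety margin. The smoothing factor, headroom, and per-replica throughput are assumed values, not recommendations.

```python
import math


def ewma_forecast(history, alpha=0.5):
    """One-step-ahead load forecast via an exponentially weighted moving average."""
    if not history:
        raise ValueError("history must not be empty")
    level = history[0]
    for x in history[1:]:
        level = alpha * x + (1 - alpha) * level
    return level


def replicas_needed(history, per_replica_rps, headroom=1.2, alpha=0.5):
    """Provision ahead of demand: forecast next-interval load, add headroom, round up."""
    forecast = ewma_forecast(history, alpha)
    return max(1, math.ceil(forecast * headroom / per_replica_rps))


# Hypothetical requests/s observed over the last four intervals.
recent = [100, 120, 180, 260]
print(ewma_forecast(recent), replicas_needed(recent, per_replica_rps=100))  # prints: 202.5 3
```

An EWMA lags behind sharp spikes. Learned predictors that use time-of-day or carbon-intensity signals aim to close exactly that gap.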

Integrated measures for carbon footprint reduction also involve incorporating localized renewable energy sources—such as solar or wind power—at edge sites. Although practical deployment may face challenges in certain regions, these initiatives, together with supportive government policies and industry standards, are pivotal in driving sustainable practices across the sector.

| Dimension | Techniques / References | Contributions | Findings |
|---|---|---|---|
| Hardware | Low-power SoCs Template:Cite, AI accelerators Template:Cite | Minimized idle power and specialized hardware for inference | Achieved notable reductions in power usage for diverse workloads |
| Software | DVFS with RL Template:Cite, partial offloading Template:Cite | Dynamically adjusted CPU frequency and partitioned compute tasks | Demonstrated adaptive energy savings under varying load conditions |
| System Orchestration | Edge-fog-cloud migration Template:Cite, container optimization Template:Cite | Relocated tasks across network layers and used lightweight virtualization | Showed improved resource utilization and reduced operational overhead |
| Policy/Regulation | Carbon credits Template:Cite, standardized metrics Template:Cite | Encouraged or mandated greener practices through financial/reporting mechanisms | Facilitated consistent adoption of sustainability measures across stakeholders |

Open Challenges

Despite significant progress, challenges remain. The heterogeneity of edge devices complicates the implementation of uniform energy-saving strategies. Energy-monitoring and carbon-intensity data are available inconsistently across regions, which hinders real-time optimization. Critical applications such as autonomous vehicles and healthcare systems cannot tolerate disruption or delay, so energy-saving measures in these settings must be weighed carefully against reliability. Diverse policy frameworks across regions further complicate regulatory compliance for global operators. Finally, security and privacy concerns related to AI-driven power management and data collection must be addressed to prevent potential vulnerabilities.

Future Directions

Emerging approaches such as federated learning for energy management offer promising avenues for distributed collaboration without compromising data privacy. Cross-layer co-design—integrating hardware, operating system functions, and application-level optimizations—could yield greater efficiency improvements than isolated strategies. The development of dynamic carbon-aware energy markets, where edge nodes schedule tasks based on real-time energy prices and carbon intensity, presents an innovative framework for sustainable resource allocation. Standardized metrics and benchmarking tools, akin to data center Power Usage Effectiveness (PUE), are also needed to enable consistent comparisons and drive further advancements in edge computing sustainability.
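The carbon-aware energy market idea can be sketched for a single deferrable job. Given hourly forecasts of electricity price and grid carbon intensity, pick the contiguous window that minimizes a weighted, normalized combination of both and still finishes before the deadline. The weighting scheme, the function name, and the forecast values are hypothetical. Prices and intensities are assumed to be positive. An actual market would also involve bidding and settlement, which are not modeled here.

```python
def best_start(prices, carbon, duration, deadline, carbon_weight=0.5):
    """Start hour minimizing a weighted sum of normalized price and carbon intensity
    over a contiguous run of `duration` hours that ends by hour index `deadline`.

    prices[h] and carbon[h] are (assumed positive) forecasts for hour h.
    """
    if not (0 < duration <= deadline <= len(prices) == len(carbon)):
        raise ValueError("inconsistent inputs")
    if not 0.0 <= carbon_weight <= 1.0:
        raise ValueError("carbon_weight must be in [0, 1]")
    p_max, c_max = max(prices), max(carbon)

    def window_cost(start):
        # Normalize each signal by its maximum so the weight trades off comparable scales.
        return sum(
            (1 - carbon_weight) * prices[h] / p_max + carbon_weight * carbon[h] / c_max
            for h in range(start, start + duration)
        )

    # Every start hour that lets the job finish by the deadline.
    return min(range(deadline - duration + 1), key=window_cost)


# Hypothetical six-hour forecast.
prices = [30, 28, 25, 20, 22, 35]        # e.g. EUR/MWh
carbon = [400, 380, 250, 150, 180, 420]  # gCO2e/kWh
print(best_start(prices, carbon, duration=2, deadline=6))  # prints 3
```

With the example forecasts, the job starts at hour 3, which is both the cheapest and the cleanest two-hour window. Tightening the deadline to hour 4 would push it to hour 2.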