Revision as of 21:23, 24 April 2025

Machine Learning at the Edge

4.1 Overview of ML at the Edge

Introduction

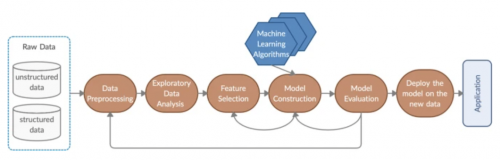

Machine learning is a branch of artificial intelligence primarily concerned with training algorithms to examine sets of data, analyze patterns, and draw conclusions, so that the gathered data can be used to generate results and carry out tasks. As an application, machine learning is especially relevant to edge computing [7]. Given the recent rise of machine learning, its deployment is likely to play a crucial role in edge computing systems as well. The abundant sensor networks often involved in edge systems provide a means of gathering very large amounts of data. With the help of machine learning, such data can be used effectively, and automation can be employed to improve efficiency. Running machine learning on edge devices themselves can also ease the burden on cloud systems and the networks connected to them, especially if the model does not need large computational power, as is the case for certain Small Language Models (SLMs). However, the main issues for machine learning at the edge lie in the devices, which may have limited computational power, and in the environment, which may change dynamically and be affected by network congestion, device outages, or other unexpected events. If these challenges can be overcome, edge systems could play a pivotal role in advancing machine learning and in using it to maximize efficiency.

Benefits of Machine Learning using Edge Computing

Data Volumes: Traditional cloud computing architecture may not be able to keep up with the massive volume of data that is often generated by IoT devices and sensors, which increases costs as well as pressure and congestion on the networks transmitting the data [5]. With an edge computing architecture, machine learning can be deployed in a distributed manner, allowing ML models to be deployed and trained across many edge devices. Because edge devices share computational tasks, they are well suited to taking large datasets and splitting the computational load, so that many devices together can compute what a single device might not have been able to handle [5]. Beyond splitting input data across edge devices, machine learning models themselves can be split across edge devices. This allows for the deployment of models that would otherwise be too large for a single edge device with limited memory. In such cases, edge nodes collaboratively pass data between them, and the global ML model is later updated based on the work of each smaller portion [5]. Additionally, the sheer amount of data that is processed by edge devices means more training data for machine learning models, which generally increases accuracy. Edge computing could therefore be an effective way of providing large amounts of relevant data for a variety of applications.

Lower Latency: For certain real-time applications, such as Virtual and Augmented Reality (VR/AR) or smart cars, the latency incurred by transmitting data to the cloud and processing it there may be too high for these applications to be efficient and safe. A key benefit of edge computing lies in the reduced latency provided by putting devices closer to the users, and this applies to machine learning as well [5].

Enhanced Privacy: Processing data locally rather than in the cloud reduces the risk of data theft and enhances privacy. The data does not have to be sent to anyone else or travel over a network where it could be compromised. This is crucial given the large amounts of data needed for machine learning. In particular, when personal data is needed for a personal application, many users would prefer the privacy gained by having it processed locally [5].

Challenges of Machine Learning Using Edge Computing

Potentially Low Computational Power: Many edge devices lack the computational power needed for deep learning applications, especially given the large amount of data and the complex operations that may be involved [1].

Energy Management: Given that edge devices may consist of sensors or other devices that are meant to have low energy consumption, the tasks associated with machine learning could quickly drain their batteries, even if they have sufficient computational capacity to run the workloads [6].

New Attack Surfaces: With more devices comes the increased potential for malicious hackers to steal data or compromise devices, despite the enhanced privacy. Encryption, access controls, and other methodologies must be employed to mitigate the potential for new attack surfaces to be exploited [6].

Applications for ML at the Edge

The utilization of data to make conjectures about what is going on in the environment and how to respond has a variety of use cases that can greatly benefit people, cities, and the environment. By leveraging and monitoring a constant stream of data and training machine learning models to detect or even respond to different events, there can be many practical applications for such systems. These would rely on the combination of edge devices and machine learning to better enhance experience for users and detect events of interest.

Self-Driving Cars: Imitation learning can be leveraged to better understand and emulate human driving. The low latency that can be provided by edge computing is especially useful for the quick decision making needed by these systems [7].

Smart Home Devices: Learning user habits from the data available about them can make smart homes more convenient for users. The increased privacy that can come with edge computing, along with a few extra cybersecurity measures, can ensure that the personal data used for training is not compromised.

Environmental and Industrial Monitoring: Models running on sensors deployed in natural environments and industrial settings could be trained to recognize anomalies or undesirable behavior, thus ensuring a quick response and prompt reporting [7].

Smart Cities: Similarly, machine learning can be applied to the data collected by sensors in cities to help with crime and emergency detection, traffic management, or energy management [7].

4.2 ML Training at the Edge

Machine Learning (ML) training at the edge is the process of developing, updating, or fine-tuning ML models directly on edge devices such as smartphones, IoT sensors, wearables, and other embedded systems, instead of depending only on centralized cloud infrastructure. This approach is becoming more important as the demand for real-time, personalized AI applications continues to grow. By training models closer to where the data is generated, edge-based ML enables faster responses, helps reduce latency, and enhances user privacy by minimizing the need to transmit sensitive data to the cloud. It is also especially useful where devices operate in environments with limited or unreliable network connectivity, allowing them to function more efficiently.

Benefits:

One significant advantage of training ML models directly on edge devices is reduced latency. By processing data locally, devices can make immediate decisions without the delays caused by transmitting data back and forth to cloud servers. This responsiveness is extremely important for applications like real-time health monitoring, autonomous driving, and industrial automation.

Additionally, training machine learning models at the edge significantly enhances user privacy. Since sensitive data can be processed and stored directly on the user's device rather than being sent to centralized cloud servers, the risk of data breaches or unauthorized access during transmission is substantially reduced. This local data handling limits the exposure of personal or confidential information, giving users greater control over their data. Edge-based training also aligns naturally with privacy regulations such as the General Data Protection Regulation (GDPR), which emphasizes strict data security, transparency, and explicit user consent. By keeping personal data localized, edge training not only improves security but also helps organizations comply with privacy laws, protecting users' rights and maintaining trust.

Efficiency and resilience are important benefits of edge training. By training machine learning models directly on edge devices, these devices become capable of processing data locally without relying on constant internet connectivity. This local processing allows edge devices to continue operating effectively even in environments where network connections are weak, unstable, or completely unavailable. Because they are not fully dependent on cloud infrastructure, edge devices can quickly adapt to changes, respond in real-time, and update their ML models based on immediate local data. As a result, edge training ensures reliable performance and uninterrupted operation, making it particularly valuable for remote locations, emergency scenarios, and harsh environments where cloud-based solutions might fail or become unreliable.

Research Papers

An important contribution to the understanding of machine learning (ML) training at the edge is the research paper "Making Distributed Edge Machine Learning for Resource-Constrained Communities and Environments Smarter: Contexts and Challenges" by Truong et al. (2023). This paper focuses on training ML models directly on edge devices in communities and environments facing limitations, such as unstable network connections, limited computational resources, and scarce technical expertise. The authors emphasize the necessity of developing context-aware ML training methods specifically tailored to these environments. Traditional centralized ML training methods often fail to operate effectively in such constrained settings, highlighting the need for decentralized, localized solutions. Truong et al. explore various challenges, including managing data efficiently, deploying suitable software frameworks, and designing intelligent runtime strategies that allow edge devices to train models effectively despite limited resources. Their work points out significant research directions, advocating for more adaptable and sustainable ML training solutions that genuinely reflect the technological and social contexts of resource-limited environments.

Tools and Frameworks:

Frameworks like TensorFlow Lite, PyTorch Mobile, and Edge Impulse are designed to support edge-based model training and inference. These tools allow developers to build and fine-tune models specifically for deployment on low-power devices.

Technical Challenges:

Despite its advantages, ML training at the edge presents challenges, including limited processing power, memory constraints, and energy efficiency. Edge devices often lack the computational resources of cloud servers, requiring lightweight models, optimized algorithms, and energy-efficient hardware.

Real World Applications: A well-known example is Apple's use of on-device training for personalized voice recognition with Siri. Instead of uploading user voice data to the cloud, Apple uses local training to improve accuracy over time while maintaining user privacy.

Dealing With Challenges

Despite the challenges of ML at the edge, a variety of methods can be used to make training more efficient and to make heavy workloads compatible with even the limited computing power of certain edge devices.

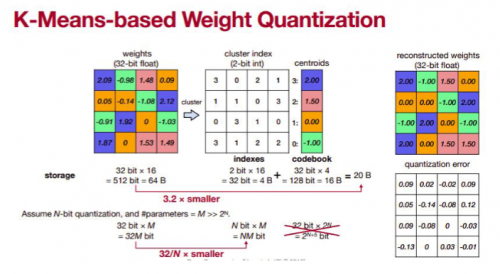

Quantization: Quantization is a method that involves reducing the precision of numbers, thus easing the burden on the computational power and memory of edge devices. There are multiple forms of quantization, but each one sacrifices some precision, chosen so that accuracy is mostly maintained while the numbers become easier to handle. For example, converting from floating point to integer datatypes means significantly less memory is used, and for some models the resulting loss of precision may be negligible. Another example is K-means based Weight Quantization, which clusters the values of a weight matrix and replaces each weight with the centroid of its cluster, so that only a small set of centroids and a cluster index per weight need to be stored. An example is shown below:

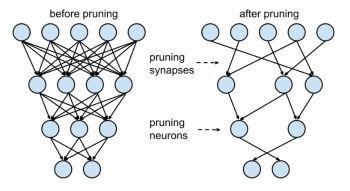
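The two forms of quantization described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than the implementation used by any particular framework: the function names (`quantize_int8`, `dequantize`, `kmeans_quantize`), the symmetric scaling scheme, and the evenly spaced centroid initialization are all assumptions made for the example.

```python
def quantize_int8(weights):
    """Symmetric linear quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale maps the largest magnitude onto 127 (assumed scheme).
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


def nearest(value, centroids):
    """Index of the centroid closest to value."""
    return min(range(len(centroids)), key=lambda c: abs(value - centroids[c]))


def kmeans_quantize(weights, k, iterations=20):
    """1-D k-means over the weights: returns (centroids, cluster index per weight)."""
    lo, hi = min(weights), max(weights)
    # Hypothetical initialization: centroids spread evenly over the weight range.
    centroids = [lo + (hi - lo) * i / max(k - 1, 1) for i in range(k)]
    for _ in range(iterations):
        assignments = [nearest(w, centroids) for w in weights]
        for c in range(k):
            members = [w for w, a in zip(weights, assignments) if a == c]
            if members:  # leave an empty cluster's centroid where it is
                centroids[c] = sum(members) / len(members)
    return centroids, [nearest(w, centroids) for w in weights]
```

With `kmeans_quantize`, a device would store only the `k` centroids plus one small index per weight, instead of one full-precision float per weight.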

Pruning: This is a method often used in neural networks, in which the least important connections between nodes and layers are removed to reduce the overall computation. Certain links between the layers are much more important than others, so only some of them significantly affect accuracy. By cutting out the least necessary ones, nearly the same accuracy can be achieved with much less computation. Pruning can also be applied to entire neurons in the network that are judged to be the least significant.
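As a rough sketch of both kinds of pruning, the example below treats a layer as a list of rows, one row of weights per neuron. It assumes that "least important" is measured by absolute weight magnitude, which is one common criterion but not the only one; the function names and the `sparsity` and `keep` parameters are hypothetical.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the smallest-magnitude connections in a 2-D weight matrix.

    `sparsity` is the fraction of connections to remove (ties at the
    threshold may remove slightly more).
    """
    magnitudes = sorted(abs(w) for row in weights for w in row)
    n_prune = int(len(magnitudes) * sparsity)
    if n_prune == 0:
        return [row[:] for row in weights]
    threshold = magnitudes[n_prune - 1]
    return [[0.0 if abs(w) <= threshold else w for w in row] for row in weights]


def prune_neurons(weights, keep):
    """Keep only the `keep` neurons (rows) with the largest L1 norm."""
    ranked = sorted(
        range(len(weights)),
        key=lambda i: sum(abs(w) for w in weights[i]),
        reverse=True,
    )
    kept = sorted(ranked[:keep])  # preserve the original neuron order
    return [weights[i] for i in kept]
```

In practice a pruned model is usually fine-tuned afterwards to recover any lost accuracy; that step is omitted here.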

4.3 ML Model Optimization at the Edge

The Need for Model Optimization at the Edge

Given the constrained resources, along with the inherently dynamic environment in which edge devices must function, model optimization is a crucial part of machine learning in edge computing. The most widely used methodology today consists of simply specifying an exceptionally large set of parameters and giving it to the model to train on. This can be feasible when hardware is very advanced and powerful, and is necessary for systems such as Large Language Models (LLMs). However, it is no longer viable when dealing with the devices and environments at the edge. It is crucial to identify the best parameters and training methodology so as to minimize the amount of work done by these devices while compromising as little as possible on the accuracy of the models. There are multiple ways to do this, including optimization or augmentation of the dataset itself, or optimization of the partition of work among the edge devices.

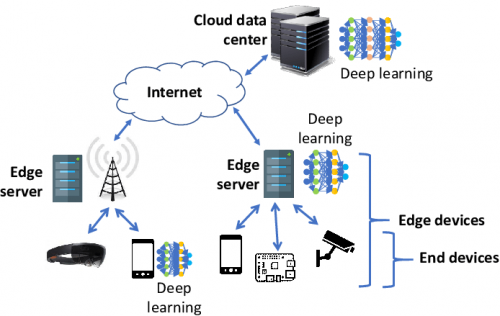

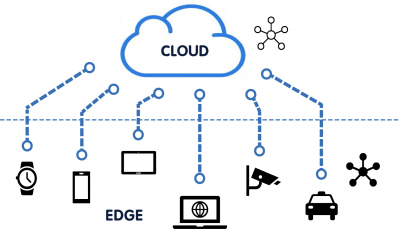

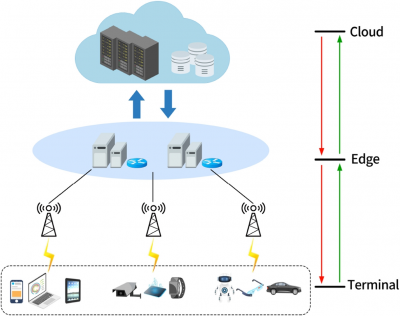

Edge and Cloud Collaboration

One methodology that is often used involves collaboration between edge and cloud devices. The cloud can process workloads that require many more resources and cannot be handled on edge devices. Edge devices, on the other hand, can store and process data locally, and so may offer lower latency and more privacy. Given the advantages of each, many have proposed that the best way to handle machine learning is through a combination of edge and cloud computing.

The primary issue facing this computing paradigm, however, is the problem of optimally selecting which workloads should be done on the cloud and which should be done on the edge. This is a crucial problem to solve, as the correct partition of workloads is the best way to ensure that the respective benefits of the devices can be leveraged. A common way to do this is to run certain computing tasks on the relevant devices and measure the time and resources they take. An example of this is the profiling step done in EdgeShard [1] and Neurosurgeon [4]. Other frameworks implement similar steps, in which the capabilities of different devices are tested in order to allocate their workloads and determine the limit at which they can provide efficient functionality. If the workload is beyond the limits of the devices, it can be sent to the cloud for processing.

The key advantage of this is that it is able to utilize the resources of the edge devices as necessary, allowing increased data privacy and lower latency. Since workloads are only processed in the cloud as needed, this will reduce the overall amount of time needed for processing because data is not constantly sent back and forth. It also allows for much less network congestion, which is crucial for many applications.
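To make the profiling idea concrete, the sketch below picks a split point for a model whose layers have already been timed on both an edge device and the cloud. It is only loosely inspired by the profiling step mentioned above and is not the algorithm used by EdgeShard or Neurosurgeon; the function `best_split`, its parameters, and the simple cost model (edge time, plus transfer time, plus cloud time) are assumptions made for illustration.

```python
def best_split(edge_ms, cloud_ms, out_bytes, input_bytes, bandwidth_bytes_per_ms):
    """Choose how many leading layers to run on the edge.

    edge_ms[i] / cloud_ms[i]: profiled latency of layer i on each side.
    out_bytes[i]: size of layer i's output, which must be uploaded if the
    split falls right after layer i. Returns (k, total_ms), where layers
    [0, k) run on the edge and layers [k, n) run in the cloud.
    """
    n = len(edge_ms)
    best_k, best_total = 0, float("inf")
    for k in range(n + 1):
        if k == n:
            transfer = 0.0  # everything stays on the edge, nothing to upload
        else:
            payload = input_bytes if k == 0 else out_bytes[k - 1]
            transfer = payload / bandwidth_bytes_per_ms
        total = sum(edge_ms[:k]) + transfer + sum(cloud_ms[k:])
        if total < best_total:
            best_k, best_total = k, total
    return best_k, best_total
```

For instance, with a slow link the best split tends to fall after a layer whose output is small, while with a very fast link sending the raw input straight to the cloud (`k == 0`) can win.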

Optimizing Workload Partitioning

The key idea behind much of the optimization done in machine learning on edge systems is to fully utilize the heterogeneous devices that these systems often contain. As such, it is important to understand the capabilities of each device so as to fully exploit its advantages. Devices can vary greatly, from smartphones with more powerful computational abilities to Raspberry Pis to sensors. More difficult tasks are offloaded to the powerful devices, while simpler tasks, or models that have been partially pretrained, can be sent to the smaller devices. In some cases, as in Mobile-Edge [2], a task may be dropped altogether if the resources are deemed insufficient. In this way, exceptionally difficult tasks do not block tasks that can be executed, and the system can continue working.
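The allocation behavior described above can be sketched as a simple greedy procedure. This is an illustrative assumption, not the method of Mobile-Edge [2]: tasks and devices are reduced to a single abstract cost and capacity number, and the names `allocate`, `tasks`, and `capacities` are hypothetical.

```python
def allocate(tasks, capacities):
    """Greedy best-fit allocation of tasks to heterogeneous devices.

    tasks: dict of task name -> abstract compute cost.
    capacities: dict of device name -> abstract compute capacity.
    Returns (assignment, dropped).
    """
    remaining = dict(capacities)
    assignment, dropped = {}, []
    # Place the hardest tasks first so they can claim the powerful devices.
    for name, cost in sorted(tasks.items(), key=lambda t: t[1], reverse=True):
        fitting = [d for d, cap in remaining.items() if cap >= cost]
        if not fitting:
            dropped.append(name)  # no device can host it, so do not block others
            continue
        # Best fit: the smallest device that still has room for the task.
        device = min(fitting, key=lambda d: remaining[d])
        assignment[name] = device
        remaining[device] -= cost
    return assignment, dropped
```

Choosing the smallest device that fits keeps the larger devices free for harder tasks, and a task that fits nowhere is dropped rather than stalling the queue.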

Dynamic Models

Given the dynamic nature of the environments in which edge devices must function, as well as the heterogeneity of the devices themselves, a dynamic model of machine learning is often employed. Such models must keep track of the currently available resources, including computation usage and power, as well as network traffic. These may change very often depending on the workloads and devices in the system. As such, training models to continuously monitor and dynamically distribute the workloads is a very important part of optimization. Simply offloading larger tasks to more powerful devices may be ineffective if such a device already has all of its computing resources or network capacity consumed by another workload.

This is commonly done by using the profiling step described above as a baseline. A machine learning model then uses the profiling data to estimate the performance of devices and/or layers. During runtime, a similar process is employed, which may update the data used and help the model refine its predictions. Network traffic is also taken into account at this stage in order to preserve the lower latency that edge computing provides. Using all of this data, together with the updates gathered at runtime, the partitioning model is able to distribute workloads dynamically, optimizing the workflow and ensuring that each device uses its resources in the most efficient manner. Two good examples of how such a system is deployed are the Neurosurgeon and EdgeShard systems, shown above.
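A very small sketch of this loop is given below. It substitutes an exponential moving average for the learned performance model, which is a deliberate simplification and not how Neurosurgeon or EdgeShard make their predictions; the class `DynamicScheduler`, its methods, and the `alpha` smoothing factor are hypothetical names chosen for the example.

```python
class DynamicScheduler:
    """Tracks per-device latency estimates and picks the fastest device."""

    def __init__(self, baseline_ms, alpha=0.5):
        # Offline profiling results serve as the initial estimates.
        self.estimate_ms = dict(baseline_ms)
        self.alpha = alpha  # weight given to the newest measurement

    def observe(self, device, measured_ms):
        """Blend a runtime measurement into the running estimate."""
        old = self.estimate_ms[device]
        self.estimate_ms[device] = self.alpha * measured_ms + (1 - self.alpha) * old

    def pick(self, network_ms):
        """Device with the lowest estimated compute plus network latency."""
        return min(
            self.estimate_ms,
            key=lambda d: self.estimate_ms[d] + network_ms.get(d, 0.0),
        )
```

If a normally fast device starts reporting slow measurements because it is busy with another workload, its estimate rises and `pick` shifts work elsewhere until the measurements recover.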

Horizontal and Vertical Partitioning

There are two major ways that these models split workloads in order to optimize machine learning: horizontal and vertical partitioning [3]. Given a set of layers that ranges from the cloud to the edge, horizontal partitioning involves splitting the workload between the layers. For example, if a large amount of computational resources is deemed necessary, the task may go to the cloud to be completed and preprocessed. On the other hand, if only a small amount of computational power is required, the work can go to edge devices. Such partitioning also depends on the confidence and accuracy level of the model at a given point. If a task is completed on an edge device and the accuracy is found to be very low, it can be sent to the cloud; if the accuracy is already fairly high and the model needs only a small amount of further work to reach the threshold deemed acceptable, it may be sent to edge devices to free up network traffic to the cloud and reduce latency [3].

The second model of partitioning is called vertical partitioning. This involves splitting the workload among the devices within a certain layer rather than among the layers themselves. This is similar to what has been described in previous sections, as it provides a means of fully utilizing the heterogeneous abilities found in edge devices. The same kinds of decisions are made as in horizontal partitioning, but all of the devices that the workload is split across operate on the same layer [3]. To fully optimize a machine learning model, both horizontal and vertical partitioning must be used.
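The two partitioning schemes can be illustrated with a pair of small helper functions. These are a sketch under simplifying assumptions and are not taken from [3]: compute demand and capacity are single integers, the confidence `threshold` of 0.8 is arbitrary, and the names `choose_tier` and `split_within_tier` are hypothetical.

```python
def choose_tier(required_compute, edge_capacity, edge_confidence=None, threshold=0.8):
    """Horizontal partitioning: decide which layer (tier) handles a task."""
    if required_compute > edge_capacity:
        return "cloud"  # too heavy for the edge layer
    if edge_confidence is not None and edge_confidence < threshold:
        return "cloud"  # the edge result was not confident enough, so escalate
    return "edge"


def split_within_tier(n_items, capacities):
    """Vertical partitioning: divide n_items among devices in the same layer.

    capacities: dict of device name -> integer capacity. Each device gets a
    share proportional to its capacity.
    """
    total = sum(capacities.values())
    shares = {d: (n_items * c) // total for d, c in capacities.items()}
    leftover = n_items - sum(shares.values())
    # Hand the rounding remainder to the most capable devices first.
    for d in sorted(capacities, key=capacities.get, reverse=True)[:leftover]:
        shares[d] += 1
    return shares
```

A system combining both would first call something like `choose_tier` to pick the layer and then something like `split_within_tier` to spread the work across the devices in that layer.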

Methods of Training Optimization

Data Selection: Certain types of data may be more useful than others, and therefore the data that most affects the accuracy of a model can be offloaded to edge devices. This decreases the workload on them because less data must be processed, while preserving the accuracy of the model as much as possible. Some larger data or models may not be needed at all. For example, edge devices can be given Small Language Models that handle simple commands and prompts, rather than an entire LLM, which may overload their computational abilities without providing enough benefit.

Data Compression: Data can be compressed to a smaller form in order to fit the constraints of edge devices. This is especially true given their limited memory, and also makes the workload smaller. Quantization, discussed previously, is a prevalent example of this.

Container Workloads: Container workloads can be very useful, as they provide all resources the device needs for processing the work. By examining the computational abilities of the device, different sized workloads can be allocated as deemed necessary to maximize the efficiency of the training.

Dynamic Allocation: As mentioned above, continuously monitoring the workload and computational usage of devices is very useful, as allocation can be done not just based on the size or complexity of the data, but by which devices are free at that moment.